“We need AI.” It’s the phrase we hear most in first meetings with companies that contact us. And our first question is always the same: what for?

It’s not a rhetorical question. In our experience, at least half the companies that “need AI” actually need something else, and that something else is usually cheaper, faster to implement, and more effective.

The 4-question framework

After years of evaluating projects, we distilled a simple framework to determine whether AI is the right tool for a problem:

1. Is the problem repetitive?

AI shines at tasks that are done many times with variations. If the process happens once a month, it probably doesn’t justify the investment in AI. A well-documented procedure is enough.

If the process happens hundreds or thousands of times a day, now we have an interesting conversation.

2. Do you have data about the problem?

Not data in general. Data specific to the problem you want to solve. If you want to predict which customers will churn, you need historical cancellation data with enough variables for a model to find patterns.

“We have lots of data” isn’t an answer. Data about what? In what format? How clean? How complete? These questions determine whether AI is viable or whether you first need to invest in data infrastructure.
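To make “how clean, how complete” concrete, here is a minimal sketch of a readiness check for the churn example. The field names (`tenure_months`, `plan`, `support_tickets`, `churned`) and the sample records are hypothetical, and treating `None` and empty strings as missing is an assumption; real data will need its own definition of “missing.”

```python
from dataclasses import dataclass


@dataclass
class ReadinessReport:
    rows: int
    labeled_fraction: float         # share of rows where the outcome is recorded
    completeness: dict[str, float]  # per-field share of non-missing values


def profile_readiness(records: list[dict], label: str, fields: list[str]) -> ReadinessReport:
    """Summarize how much usable, problem-specific data is present."""
    total = len(records)
    if total == 0:
        return ReadinessReport(0, 0.0, {f: 0.0 for f in fields})

    def present(record: dict, key: str) -> bool:
        # Assumption: None and empty strings count as missing; 0 and False do not.
        return record.get(key) not in (None, "")

    labeled = sum(present(r, label) for r in records)
    completeness = {f: sum(present(r, f) for r in records) / total for f in fields}
    return ReadinessReport(total, labeled / total, completeness)


# Hypothetical customer records for a churn problem.
customers = [
    {"tenure_months": 14, "plan": "pro", "support_tickets": 3, "churned": True},
    {"tenure_months": 2, "plan": "", "support_tickets": None, "churned": False},
    {"tenure_months": 30, "plan": "basic", "support_tickets": 0, "churned": None},
    {"tenure_months": 8, "plan": "pro", "support_tickets": 1, "churned": False},
]

report = profile_readiness(customers, "churned", ["tenure_months", "plan", "support_tickets"])
print(report)
```

A report like this doesn’t say whether a model will work. It only shows whether the outcome you want to predict is actually recorded, and which variables are too sparse to rely on.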

3. Is the cost of error manageable?

Every AI model makes mistakes. The question is whether your context tolerates those mistakes. Classifying emails into categories with a 5% error rate is acceptable. Approving credit with a 5% error rate can be catastrophic.

This doesn’t mean AI can’t be used in high-risk contexts. It means it needs human oversight at critical decision points. System design changes completely based on error tolerance.
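One way to reason about this is to put a number on the cost of errors and to decide up front which predictions go to a person. The sketch below is illustrative only: the volumes, the cost per error, and the 0.95 confidence threshold are hypothetical values, and the rule that high-stakes decisions always get human review is one possible design, not the only one.

```python
def expected_error_cost(volume: int, error_rate: float, cost_per_error: float) -> float:
    """Expected cost of mistakes across `volume` automated decisions."""
    return volume * error_rate * cost_per_error


def route(confidence: float, high_stakes: bool, threshold: float = 0.95) -> str:
    """Decide whether a prediction is applied automatically or sent to a person.

    Assumption: high-stakes decisions are always reviewed; low-stakes ones are
    reviewed only when the model's confidence falls below the threshold.
    """
    if high_stakes or confidence < threshold:
        return "human_review"
    return "auto"


# Same 5% error rate, very different consequences (all figures hypothetical).
email_cost = expected_error_cost(volume=1000, error_rate=0.05, cost_per_error=0.50)
credit_cost = expected_error_cost(volume=1000, error_rate=0.05, cost_per_error=5000.0)
print(f"email triage: {email_cost:.2f}  credit approval: {credit_cost:.2f}")

print(route(confidence=0.99, high_stakes=False))  # low stakes, confident
print(route(confidence=0.80, high_stakes=False))  # low stakes, uncertain
print(route(confidence=0.99, high_stakes=True))   # high stakes
```

The error rate is identical in both cases. What changes the system design is the cost attached to each mistake and where the human checkpoint sits.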

4. Can you solve it with rules?

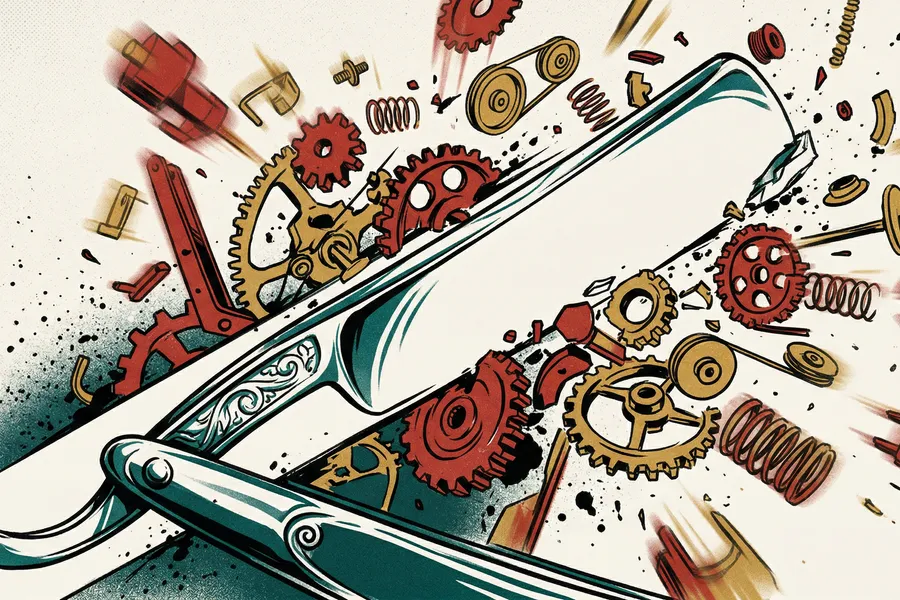

This is the question many people want to skip. If you can write a set of if/then rules that solves 90% of cases, you probably don’t need AI. You need a good rule-based system.
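As a rough illustration of what “a set of if/then rules” can look like, here is a minimal sketch that routes support tickets and measures how many of them the rules handle. The ticket fields (`subject`, `amount`), the three rules, and the sample tickets are all hypothetical.

```python
from typing import Callable, Optional

Rule = tuple[Callable[[dict], bool], str]

# Hypothetical routing rules, checked in order: (condition, outcome).
RULES: list[Rule] = [
    (lambda t: "refund" in t["subject"].lower(), "billing"),
    (lambda t: "password" in t["subject"].lower(), "account"),
    (lambda t: t["amount"] > 1000, "escalate"),
]


def apply_rules(ticket: dict) -> Optional[str]:
    """Return the outcome of the first matching rule, or None if no rule applies."""
    for condition, outcome in RULES:
        if condition(ticket):
            return outcome
    return None


def coverage(tickets: list[dict]) -> float:
    """Share of tickets that at least one rule handles."""
    if not tickets:
        return 0.0
    handled = sum(apply_rules(t) is not None for t in tickets)
    return handled / len(tickets)


tickets = [
    {"subject": "Refund for order 1182", "amount": 40},
    {"subject": "Password reset not working", "amount": 0},
    {"subject": "Invoice question", "amount": 2500},
    {"subject": "Something feels off with my dashboard", "amount": 0},
]

print(f"rule coverage: {coverage(tickets):.0%}")
```

Coverage here only counts tickets that matched a rule. Checking that the matched outcome is also the right one would require a set of tickets with known correct answers.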

AI is for problems where rules don’t scale. Where there are too many variables, too many combinations, or too much ambiguity to code manually. If you can define it with boolean logic, do it. It’s cheaper, more predictable, and easier to maintain.

Philosophy of science calls this Occam’s razor: given two explanations that account for the same facts, choose the simpler one. The same applies to technical solutions: given two tools that solve the same problem, choose the less complex one.
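Taken together, the four questions can be sketched as a small decision function. This is a simplification under stated assumptions: the `Assessment` fields, the cutoff of 100 runs per day, and the order in which the questions are checked are hypothetical choices made for illustration. Only the 90% rule-coverage figure comes from the text above.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    runs_per_day: float              # Q1: how often the process happens
    has_problem_specific_data: bool  # Q2: data about this problem, not data in general
    error_cost_manageable: bool      # Q3: can the context tolerate model mistakes?
    rule_coverage: float             # Q4: share of cases simple rules already solve


def recommend(a: Assessment, min_runs_per_day: float = 100, rules_enough: float = 0.9) -> str:
    """Map the four answers to a first recommendation (thresholds are assumptions)."""
    if a.runs_per_day < min_runs_per_day:
        return "document the procedure"
    if a.rule_coverage >= rules_enough:
        return "build a rule-based system"
    if not a.has_problem_specific_data:
        return "invest in data capture first"
    if not a.error_cost_manageable:
        return "consider AI with human oversight"
    return "AI is a reasonable candidate"


# A monthly process: not repetitive enough to justify the investment.
print(recommend(Assessment(1 / 30, True, True, 0.2)))
# High volume, but rules already solve most cases.
print(recommend(Assessment(5000, True, True, 0.95)))
# High volume, ambiguous cases, data available, errors tolerable.
print(recommend(Assessment(5000, True, True, 0.4)))
```

A real evaluation involves judgment that a function like this can’t capture. The value of writing it down is that it forces each answer to be explicit.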

The 3 most common scenarios

Scenario A: “We want AI but don’t have data.” Recommendation: invest in data capture and organization first. Define what you need to measure, implement instrumentation, and after 6 to 12 months, evaluate AI with real data. It’s not the answer most clients want to hear, but it’s the honest one.

Scenario B: “We have data and a clear problem, but don’t know where to start.” Recommendation: a scoped pilot project. Choose a specific sub-problem, build a proof of concept in 4-6 weeks, and measure results against the current process. If the numbers work, scale. If not, you learned something valuable without betting everything.

Scenario C: “We already tried AI and it didn’t work.” Recommendation: before trying again, understand why it failed. 80% of AI failures we’ve seen aren’t about the technology. They’re about insufficient data, misaligned expectations, or poorly defined problems. Fix the root cause before iterating on the solution.

What most consultancies won’t tell you

There’s a structural conflict of interest in the AI consulting industry: if they tell you that you don’t need AI, they lose the project. This creates a perverse incentive to find AI use cases where they don’t necessarily exist.

At Redstone Labs we handle it differently. If the evaluation indicates you don’t need AI, we tell you. And we help with what you actually need, even if it’s less exciting. Because we’d rather have a client who trusts us and comes back than an AI project that should never have existed.

Not every business problem is solved with AI. But every business problem can be honestly evaluated. That’s what we do.